What Is Artificial Intelligence (AI)?

Artificial Intelligence (AI) is a technology that enables computers and machines to simulate human-like learning, understanding, problem-solving, decision-making, creativity, and autonomy. Applications and devices powered by AI can recognize and identify objects, understand and respond to human language, learn from new information and experience, provide detailed recommendations to users or experts, and even act independently, without human intervention (a classic example being a self-driving car). As of 2024, most AI research, practice, and news coverage focuses on generative AI, a form of AI that can create original text, images, video, and other content. To fully understand generative AI, it’s essential to first understand the technologies it’s built on: machine learning (ML) and deep learning (DL).

Machine Learning (ML)

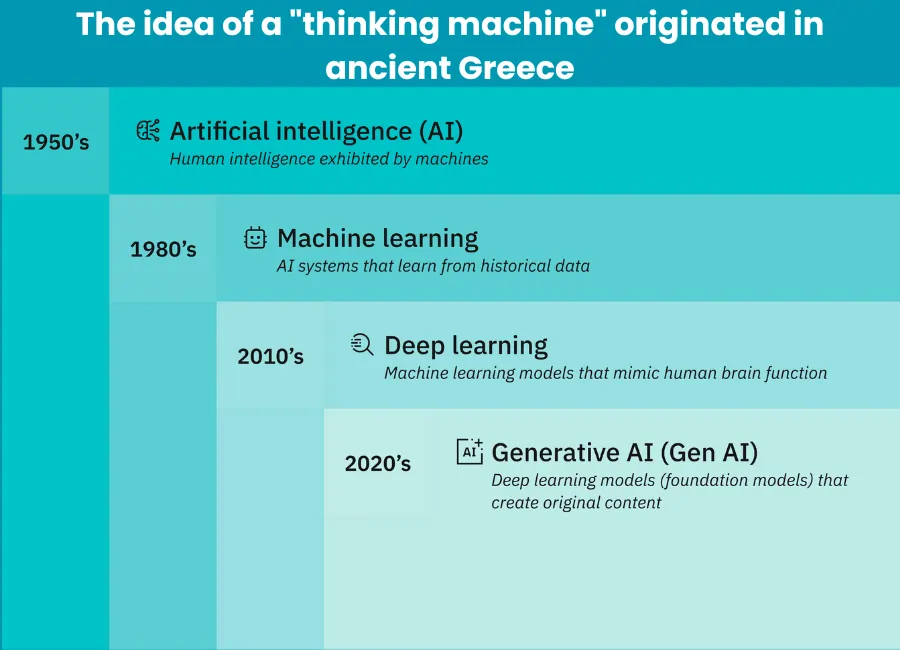

AI can be imagined as a series of interconnected concepts developed over more than 70 years:

The foundation of AI is machine learning, which involves building models based on data and algorithms to make predictions or decisions. It encompasses a wide range of techniques that enable computers to learn and draw conclusions from data without being explicitly programmed for specific tasks.

One of the most popular types of machine learning algorithms is the neural network. These algorithms mimic the structure and function of the human brain. A neural network consists of interconnected nodes that work together to process and analyze complex data. Neural networks are particularly well-suited to tasks that involve identifying complex patterns and relationships in large datasets.
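As a rough illustration of "interconnected nodes," the sketch below passes two input values through a tiny network in plain Python. Each node computes a weighted sum of its inputs plus a bias and applies a sigmoid activation. The function names and the hand-picked weights in `EXAMPLE_LAYERS` are assumptions made for this example; a real network would learn its weights from data.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """Compute one layer: each node takes a weighted sum of all inputs,
    adds its bias, and applies the sigmoid activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical, hand-picked weights: 2 inputs -> 2 hidden nodes -> 1 output node.
EXAMPLE_LAYERS = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),  # hidden layer
    ([[1.0, -1.0]], [0.0]),                    # output layer
]

output = network_forward([1.0, 1.0], EXAMPLE_LAYERS)
```

Here `output` is a one-element list holding a value between 0 and 1, which could be read as a score or probability depending on the task.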

The simplest form of machine learning is supervised learning, which uses labeled datasets to train algorithms to classify data or predict outcomes accurately. In supervised learning, humans pair each training example with the correct output label.
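A minimal sketch of supervised learning, assuming a toy dataset: every training example is paired with a human-provided label, and a new example receives the label of its closest neighbor (a 1-nearest-neighbor classifier). The fruit measurements and the `predict` helper are invented for illustration only.

```python
from math import dist

# Hypothetical labeled training set: (features, label) pairs.
# Features are (weight in grams, diameter in cm); the values are made up.
TRAINING_DATA = [
    ((150.0, 7.0), "apple"),
    ((170.0, 7.5), "apple"),
    ((120.0, 6.0), "lemon"),
    ((110.0, 5.5), "lemon"),
]


def predict(features, training_data=TRAINING_DATA):
    """Return the label of the training example closest to `features`."""
    _, label = min(training_data, key=lambda pair: dist(pair[0], features))
    return label


print(predict((160.0, 7.2)))
print(predict((115.0, 5.8)))
```

The labels are what make this supervised: the algorithm never has to discover the categories itself, it only has to generalize from the labeled examples.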

Deep Learning (DL)

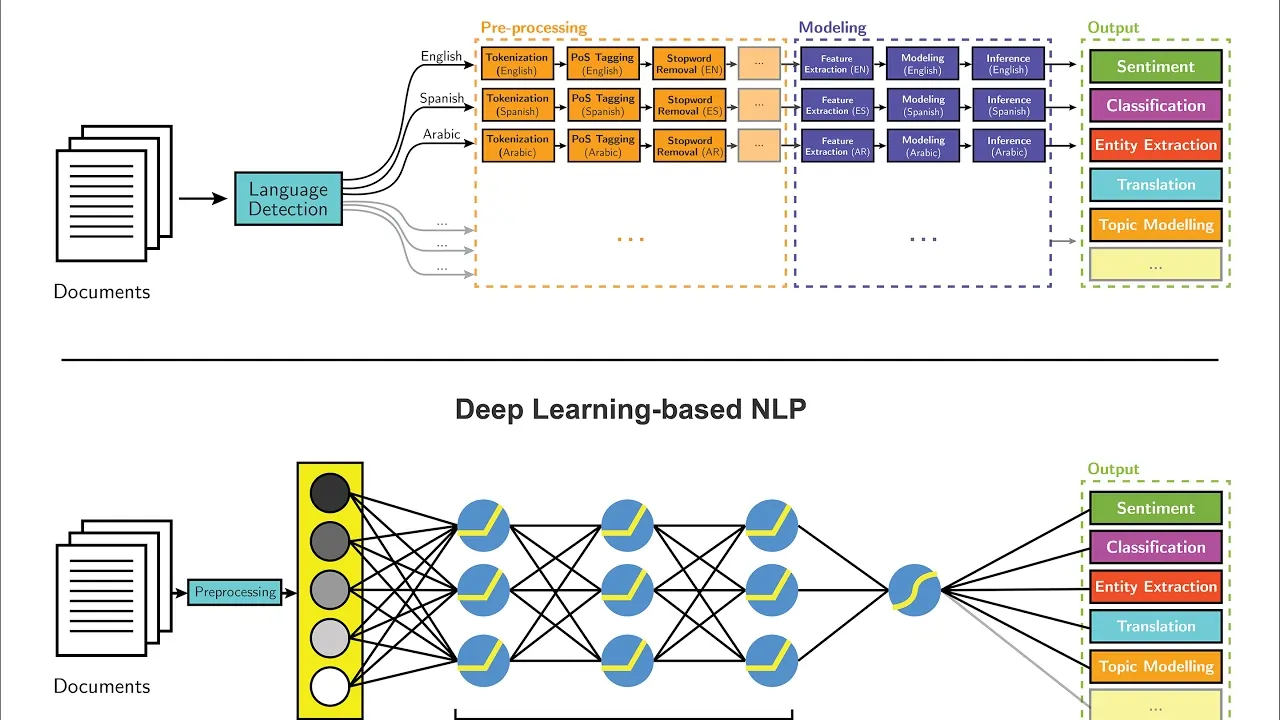

Deep learning is a subfield of machine learning that uses multilayered neural networks, called deep neural networks, to more accurately simulate the complex decision-making ability of the human brain.

Unlike traditional machine learning models, whose neural networks typically use only one or two hidden layers, deep neural networks include an input layer, at least three (and often hundreds of) hidden layers, and an output layer.

These layers allow for unsupervised learning: they can automatically extract features from large, unstructured, and unlabeled datasets and independently make predictions about what the data represents.

Because this kind of feature extraction does not depend on humans labeling the data, deep learning dramatically expands the scalability of machine learning. It is especially well-suited for tasks like natural language processing (NLP), computer vision, and other challenges that involve identifying complex patterns and connections in massive datasets quickly and accurately. A form of deep learning is at the core of most AI applications we use today.

Generative Artificial Intelligence (Generative AI)

Generative artificial intelligence, often called “Gen AI,” refers to deep learning models capable of producing complex, original content—such as long-form text, high-quality images, realistic video or audio, and more—based on user prompts or input.

Generally, generative models encode a simplified representation of their training data and then use that representation to generate new output that resembles the original data but is not identical.
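The "encode, then generate" idea can be sketched with the simplest generative model there is: a normal distribution fitted to numerical data. The two-number summary (mean and standard deviation) plays the role of the simplified representation, and sampling from it yields new values that resemble the training data without copying it. The function names and sample data are assumptions for this example, far simpler than any deep generative model.

```python
import random
import statistics


def fit(data):
    """Encode the data as a simplified representation: (mean, standard deviation)."""
    return statistics.mean(data), statistics.stdev(data)


def generate(model, count, seed=0):
    """Draw new values from the fitted distribution.
    The seed is fixed only to make the example repeatable."""
    mean, stdev = model
    rng = random.Random(seed)
    return [rng.gauss(mean, stdev) for _ in range(count)]


# Hypothetical training data.
training_data = [1.0, 2.0, 3.0, 4.0, 5.0]
model = fit(training_data)
new_samples = generate(model, count=5)
```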

Generative models have long been used in statistics to analyze numerical data. However, over the last decade, they’ve evolved to handle and generate more complex forms of data. This evolution coincides with the emergence of three advanced types of deep learning models:

- Variational Autoencoders (VAEs), introduced in 2013, enabled the creation of models that could generate multiple variations of content based on a request or instruction.

- Diffusion Models, first described in 2015, gradually add noise to training data and learn to reverse that process, which lets them generate new elements that follow a distribution similar to that of the original dataset.

- Transformers (Transformer Models), which are trained on sequential data to generate extended sequences (such as words in sentences, shapes in images, video frames, or programming commands). Transformers are the foundation of today’s most popular generative AI tools, including ChatGPT, GPT-4, Copilot, BERT, Gemini, and Midjourney.
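To make the diffusion idea from the list above more concrete, here is a minimal sketch of the forward (noising) half of the process only: at each step the data is scaled down slightly and mixed with Gaussian noise. The learned reverse (denoising) network, which is what actually generates content, is omitted. The step count, the noise level `beta`, and the function names are assumptions chosen for illustration.

```python
import math
import random


def add_noise(values, beta, rng):
    """One noising step: shrink each value slightly and add Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    noise_scale = math.sqrt(beta)
    return [keep * v + noise_scale * rng.gauss(0.0, 1.0) for v in values]


def forward_diffusion(values, steps, beta=0.1, seed=0):
    """Return the list of progressively noisier versions of `values`.
    The seed is fixed only to make the example repeatable."""
    rng = random.Random(seed)
    trajectory = [list(values)]
    for _ in range(steps):
        trajectory.append(add_noise(trajectory[-1], beta, rng))
    return trajectory


trajectory = forward_diffusion([1.0, -1.0], steps=5)
```

A diffusion model is trained to undo steps like these, so that it can start from pure noise and work backward toward a plausible new sample.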

How Generative Artificial Intelligence Works

In general, generative AI operates in three phases:

- Training – to build the foundation model

- Fine-tuning – to adapt the model for specific applications

- Generation, Evaluation, and Further Optimization – to refine performance over time

Training

Generative AI begins with a foundation model—a deep learning model that serves as the base for various generative AI applications.

The most common type today is the large language model (LLM) for generating text. However, there are also foundation models for generating images, video, audio, music, and even multimodal models that support multiple types of content.

To create a foundation model, specialists train a deep learning algorithm on massive volumes of raw, unstructured, and unlabeled data—such as terabytes or even petabytes of text, images, or video from the internet.

The training process produces a neural network with billions of parameters—encoded representations of patterns and relationships in the data—that can autonomously generate content in response to prompts. This is the foundation model.
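A toy version of this idea, assuming a bigram (word-pair) model rather than a neural network: it is "trained" on raw, unlabeled text by recording which word follows which, and it then generates text from a one-word prompt. The stored word pairs stand in for the billions of parameters of a real foundation model. All names and the sample sentence are hypothetical.

```python
import random
from collections import defaultdict


def train_bigram_model(text):
    """Learn from raw text: record every word that follows each word."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return dict(model)


def generate(model, prompt_word, max_words=8, seed=0):
    """Extend the prompt one word at a time by sampling a recorded follower.
    The seed is fixed only to make the example repeatable."""
    rng = random.Random(seed)
    output = [prompt_word]
    while len(output) < max_words and output[-1] in model:
        output.append(rng.choice(model[output[-1]]))
    return " ".join(output)


model = train_bigram_model("the cat sat on the mat")
print(generate(model, "the"))
```

No labels are involved: the text itself supplies the training signal, which is what makes it practical to train on very large unlabeled datasets.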

This training process is computationally intensive, time-consuming, and expensive. It typically requires thousands of GPUs and weeks of training time, often costing millions of dollars. Open-source foundation model projects, such as Meta’s Llama 2, let Gen AI developers skip this step and its costs.

Fine-tuning

Next, the model must be adapted to a specific content generation task. This can be done in several ways:

- Fine-tuning, which involves feeding the model labeled datasets specific to a domain, such as questions or requests paired with ideal answers in a desired format

- Reinforcement Learning from Human Feedback (RLHF), in which humans evaluate the model’s outputs for accuracy or relevance and provide feedback so the model can improve. This may involve users submitting edits or corrections directly to a chatbot or virtual assistant.
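The fine-tuning approach above can be sketched at toy scale, assuming a one-parameter model `y = weight * x` in place of a neural network: training starts from an already "pretrained" weight and continues with gradient descent on a small, labeled, domain-specific dataset. The starting weight, the examples, and the hyperparameters are invented for illustration; RLHF is not shown.

```python
def fine_tune(weight, labeled_examples, learning_rate=0.05, epochs=200):
    """Continue training y = weight * x on labeled (x, y) pairs
    by gradient descent on the mean squared error."""
    n = len(labeled_examples)
    for _ in range(epochs):
        gradient = sum(2 * (weight * x - y) * x for x, y in labeled_examples) / n
        weight -= learning_rate * gradient
    return weight


# Hypothetical weight left over from general-purpose training.
PRETRAINED_WEIGHT = 2.0

# Hypothetical domain-specific labeled data: inputs paired with ideal outputs.
DOMAIN_EXAMPLES = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]

tuned_weight = fine_tune(PRETRAINED_WEIGHT, DOMAIN_EXAMPLES)
```

The point of the sketch is the starting position: fine-tuning adjusts an existing model with relatively little labeled data instead of training from scratch.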

Generation, Evaluation, and Further Optimization

Developers and users continuously evaluate the results generated by their Gen AI applications and adjust the models—sometimes weekly—for better accuracy and relevance. In contrast, the foundation model itself is updated much less frequently, typically every year or 18 months.

Another way to enhance a generative AI application is Retrieval-Augmented Generation (RAG), which expands the foundation model with access to external, up-to-date sources to improve output precision and relevance.
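A minimal sketch of the retrieval half of RAG, assuming a naive word-overlap score instead of the vector search that production systems typically use. It picks the most relevant document from an external collection and places it in the prompt ahead of the question; the call to an actual model is left out. The documents, function names, and prompt layout are all hypothetical.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Put the retrieved context in front of the question for the model."""
    context = "\n".join(retrieve(question, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {question}"


# Hypothetical external knowledge source.
DOCUMENTS = [
    "The office opens at 9 am on weekdays.",
    "Refunds are processed within 14 days.",
]

prompt = build_prompt("When are refunds processed?", DOCUMENTS)
```

Because the context is fetched at question time, the external documents can be updated without retraining the foundation model.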

Benefits of Artificial Intelligence

Artificial intelligence enhances the efficiency, speed, and scalability of many business processes. Organizations use AI to reduce costs, minimize human error, improve customer experience, generate creative ideas and content, and make more accurate predictions.

Key benefits of AI include:

- Process Automation – AI tools, like robotic process automation (RPA), streamline repetitive tasks—such as data entry, document processing, and customer support—freeing up employees to focus on more creative or strategic work.

- Speed and Efficiency – AI models work much faster than humans and can process huge amounts of data in a fraction of the time.

- Accuracy and Consistency – AI reduces the chance of human errors and maintains consistent quality of output.

- Scalability – AI systems can operate 24/7 and handle large numbers of tasks or requests in parallel, limited mainly by the available computing resources.

- Cost Reduction – By automating tasks and optimizing decision-making, AI reduces labor and operational costs.

- Enhanced Customer Experience – AI chatbots, personalized recommendations, and predictive support improve customer satisfaction.

- Innovation and Creativity – Generative AI tools assist in creative fields such as design, writing, coding, and music composition.

- Better Decision-Making – AI models can analyze trends and patterns in big data to support smarter business strategies.

Real-World Use Cases of Artificial Intelligence

AI is used in almost every industry today. Here are some practical examples:

Healthcare

- Early diagnosis using image recognition (e.g., detecting tumors in MRI scans)

- Virtual health assistants that answer patient questions

- Drug discovery and personalized treatment recommendations

Retail & E-Commerce

- Product recommendation engines

- Chatbots for 24/7 customer support

- Inventory management and demand forecasting

Finance

- Fraud detection using anomaly detection algorithms

- Algorithmic trading

- Credit scoring and risk assessment
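As one simple illustration of the anomaly-detection idea behind fraud detection, the sketch below flags transaction amounts that sit far from the median, using a modified z-score based on the median absolute deviation (MAD). The threshold of 3.5, the sample amounts, and the function name are assumptions for this example; real fraud systems combine many more signals than the amount alone.

```python
import statistics


def find_anomalies(amounts, threshold=3.5):
    """Return amounts whose modified z-score exceeds the threshold.
    The score uses the median and MAD, which are not distorted
    by the outliers themselves the way mean and stdev are."""
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread in the data, so nothing stands out
    return [a for a in amounts if 0.6745 * abs(a - median) / mad > threshold]


# Hypothetical transaction amounts for one account.
transactions = [20.0, 22.0, 19.0, 21.0, 20.0, 500.0]
print(find_anomalies(transactions))
```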

Manufacturing

- Predictive maintenance of machinery

- Quality control with computer vision

- Supply chain optimization

Media & Content Creation

- Automatic transcription and translation

- AI-generated articles, images, or videos

- Personalized content feeds and summarization

Education

- AI tutors that provide personalized learning paths

- Automated grading of assignments

- AI-powered language learning tools

Cybersecurity

- Real-time threat detection and response

- AI-based fraud detection systems

- Behavior analysis for identifying potential risks

Challenges and Ethical Concerns of AI

Despite its benefits, AI also brings significant risks and concerns that society must address:

Main Challenges

- Bias in AI Models – If training data is biased, the AI system may produce discriminatory or unfair results, especially in sensitive areas like hiring, lending, or law enforcement.

- Misinformation and Deepfakes – Generative AI can be used to create realistic fake content, such as videos, voices, or texts, posing risks for disinformation, scams, or reputational damage.

- Job Displacement – Automation may replace certain roles, especially repetitive or rule-based jobs, raising concerns about unemployment and the need for upskilling. According to Toptal’s Q4 2025 Market Report, experience is becoming a critical differentiator in the AI era: while demand for senior professionals has seen strong growth, generalists and entry-level workers are facing weaker demand.

- Security Risks – AI systems can be exploited for cyberattacks or other malicious purposes, and some models can leak sensitive data if not properly managed.

- Lack of Transparency (Black Box Problem) – It’s often hard to explain how AI models arrive at a decision, which raises accountability issues, especially in legal, financial, or healthcare settings.

- Environmental Impact – Training large AI models consumes significant energy and computing resources, contributing to carbon emissions.

Ethics in Artificial Intelligence

To use AI responsibly, developers, companies, and policymakers must prioritize ethical principles for AI. These include:

- Fairness – Ensuring that AI does not reinforce discrimination

- Transparency – Making AI decisions understandable and explainable

- Privacy – Respecting user data and securing it properly

- Accountability – Assigning responsibility for the actions and outcomes of AI

- Sustainability – Building energy-efficient and environmentally friendly AI models

- Human-Centered Design – Designing AI tools to support, not replace, human intelligence and values

Major organizations—including the EU, UNESCO, OECD, and ISO—are developing global ethical frameworks and standards for AI to ensure it’s used in a fair, safe, and transparent manner.

Conclusion

Artificial intelligence has transformed how we live, work, and interact with technology. Its rapid development has unlocked new possibilities in nearly every industry—from healthcare to creative fields.

However, alongside these benefits, it also brings new responsibilities. Ensuring that AI is used ethically, fairly, and transparently is essential for building a future where technology empowers people, rather than replacing or harming them.